Hooking Coding Agents with the Cedar Policy Language

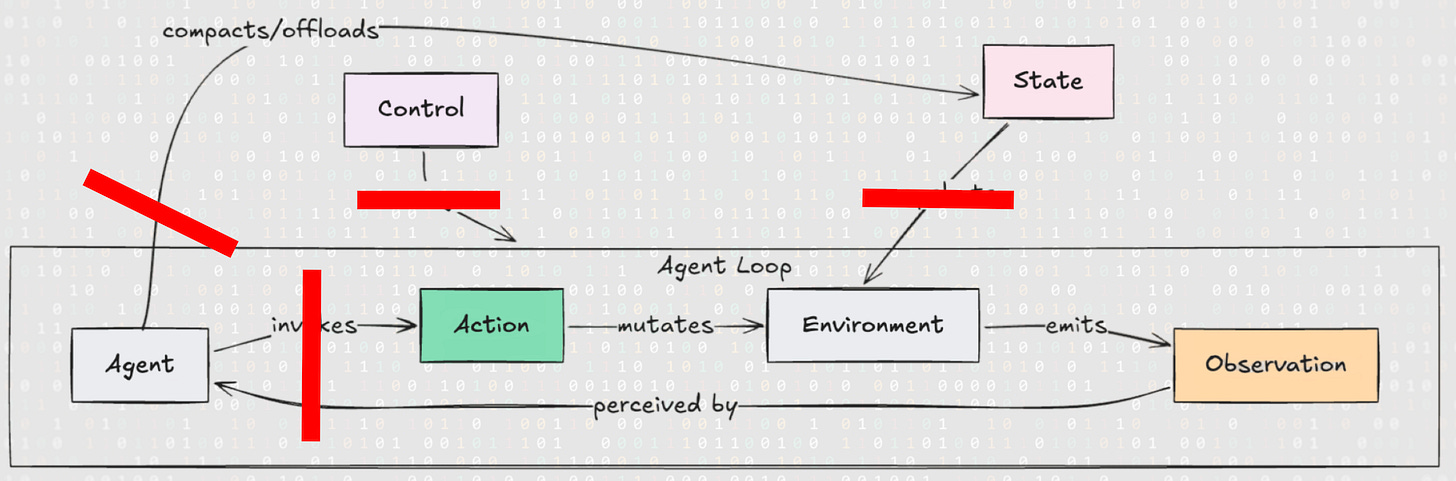

A reference monitor built on the trajectory event model.

This is a visual transcript of the talk I gave at Unprompted. You can find the slides and released source code at: https://github.com/sondera-ai/sondera-coding-agent-hooks.

Coding agents are becoming increasingly autonomous, processing untrusted data while holding access to our crown jewels. Despite the risks, we are using them everywhere across the enterprise because the utility outweighs the fear. The last six months, however, have been an absolute dumpster fire of vulnerabilities.

We need a structured way to understand and mitigate these issues. In this post, I’m going to show you how to hook coding agents and deterministically adjudicate their actions using the Cedar Policy Language.

Coding Agent Loop and Trajectory Event Model

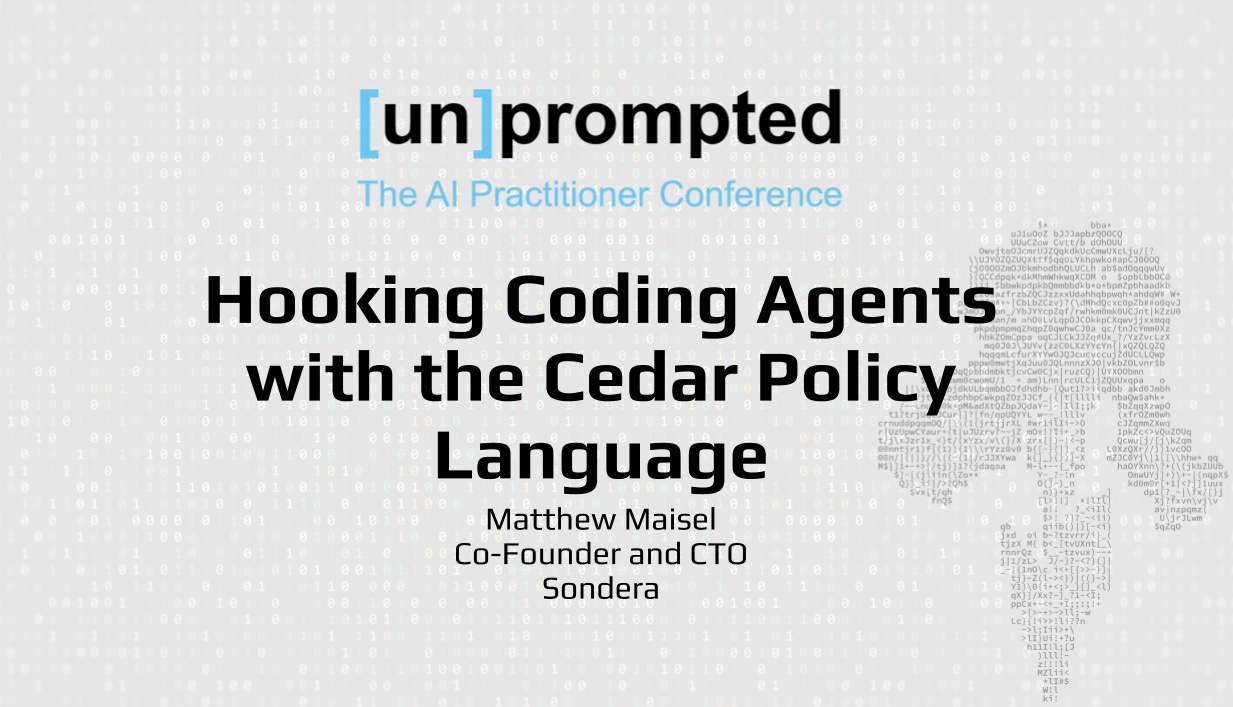

Let’s look at the anatomy of coding agents. Scaffolds give language models agency through tool calling, allowing them to interact with their environment. With these affordances, agents plan, generate code, and execute tools in iterative loops. We can map this entire action space into a trajectory event model.

The agent initiates an action, such as writing to a file, running a shell command, or executing code. Actions mutate the environment, and the system emits an observation back to the agent, providing the context it needs for the next inference call. Running alongside these actions are control events, like user prompts, permission requests or subagent orchestration, as well as state events, which handle backend mechanics like memory compaction and context snapshots.

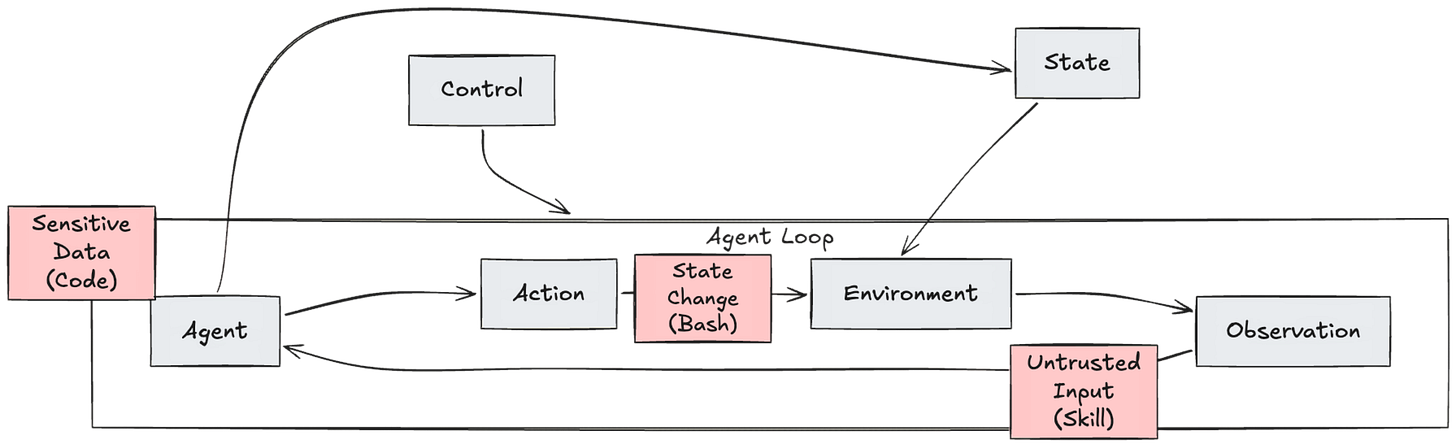

Trajectory-Based Threat Modeling

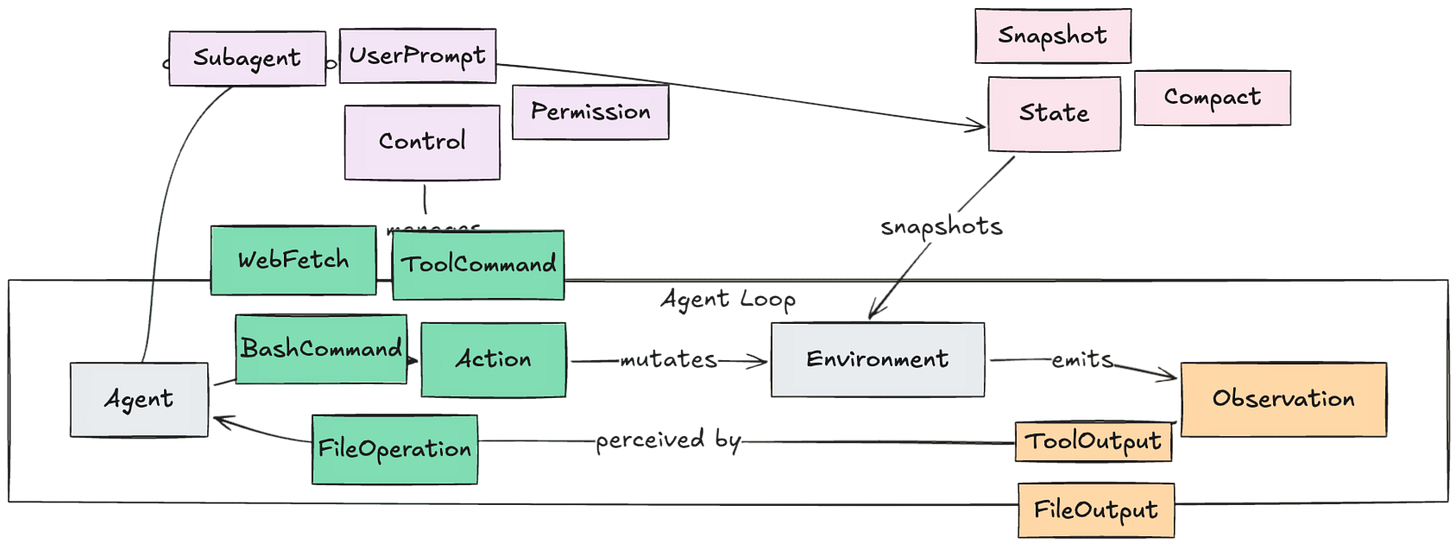

This brings us to the now canonical lethal trifecta model for data exfiltration. When evaluating an agentic system before deployment, you must understand the risk of combining tools that possess three characteristics:

Access to sensitive private data.

Exposure to untrusted content.

The ability to execute consequential state changes or external communications.

When an agent has all three of these capabilities, an indirect prompt injection can lead to data exfiltration or remote code execution. Asking an LLM to self-regulate against this is not guaranteed: we require deterministic controls.

We can map the canonical lethal trifecta to this trajectory model:

Untrusted input from skills fetched from a marketplace is returned as an

observation.Sensitive data like private repos or docs is in the agent’s context or retrieved from memory.

State change through shell commands or code execution

actionscan lead to exfiltration. But this is only a narrow threat model for data exfiltration. We can do a lot more and even handle complex, multi-step attacks. We can also map other threat and risk model frameworks, like the OWASP Top 10 for Agentic Applications.

If we want to disrupt the lethal trifecta and other risks while preserving utility, we can’t just look at static capabilities at design or plan time; we have to intercept the agent at runtime. We must build layered defenses at event boundaries, which is where Reference Monitors come in.

Reference Monitor via Hooks

To enforce control at these boundaries, we use a Reference Monitor that meets three criteria:

Always Invoked: The monitor must intercept every single tool call and event without exception.

Tamper-Proof: The agent must not have any mechanism to alter the monitor’s code or the underlying security policies.

Verifiable: The logic must be simple and deterministic enough to be audited for correctness, unlike the opaque decision-making of an LLM.

Unlike traditional operating systems that have a clear separation between user space and kernel mode, agents operate with a probabilistic Trusted Computing Base. The Reference Monitor must sit outside the agent, acting as a hard, deterministic boundary between the agent loop and your filesystem or shell.

Finally, the reference monitor is only as good as the policy enforcement points it supports. This brings us to hooks.

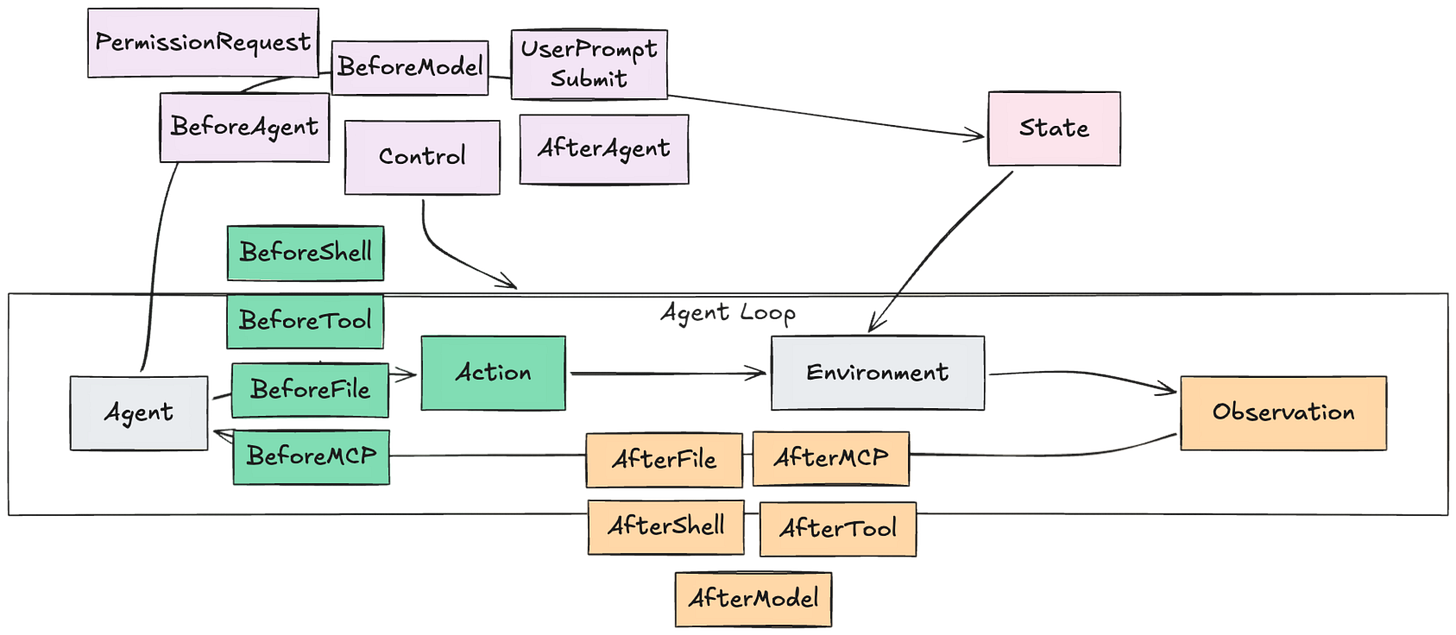

Hook lifecycle for event mediation

Hooks allow us to intercept these trajectory events, process them, and decide whether to allow, modify, or stop the agent’s loop. They are invoked at different lifecycle events.

Each coding agent implements hooks in its own implementation details. Gemini has Before and After Model hooks that can stream individual tokens. Claude Code doesn’t expose any Model/Agent hooks other than the final agent response as an After Agent hook. Cursor offers granular MCP hooks in addition to generic Tool Calls.

Now that we have a policy enforcement point, we need a way to express policies for this trajectory model.

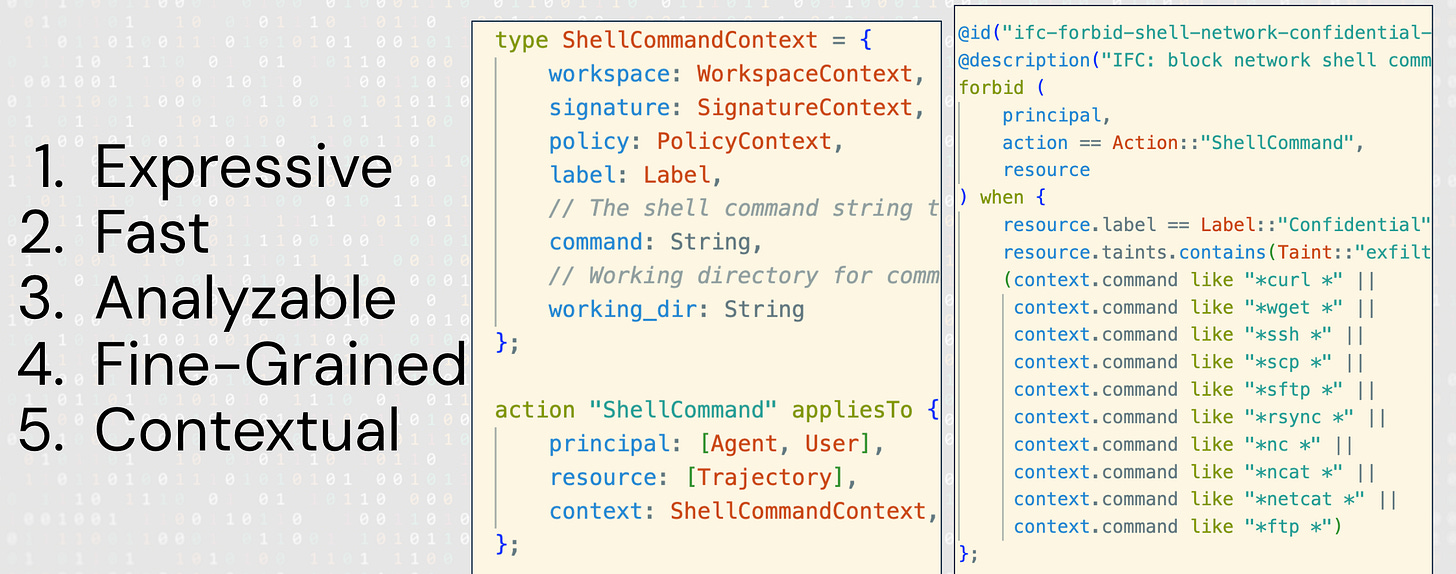

Authorizing Actions with Policy Languages

Can this (agent) principal perform this action on a resource in this context?

We choose the Cedar policy language to authorize trajectory events when a hook event is triggered. Cedar is expressive, fast, and analyzable thanks to its formal properties. Unlike other policy languages like Rego, Cedar policies can be analyzed for contradictory, vacuous, or shadowed policy subsets. Cedar supports permission models like Attribute-Based Access Control, which maps well to our domain.

Look at the ShellCommand action and context type. We define schemas and entities for the Agent, the User, and the Trajectory, including attributes for signature-based tags, entity types for data sensitivity classifications, and attributes from safety model classifications.

Policies don’t need to just be security-oriented; we can author policies for coding agents engaged in planning behavior. Turns out, you can actually write files when you’re in Claude Code Plan mode.

John Brock covered this in detail in recently dropped research.

When comparing LLMs-as-judges versus policy-as-code, the distinguishing factor isn’t just determinism versus non-determinism; it’s about how opaque the guardrail is. An LLM’s behavior is emergent from its billions of parameter values, making it difficult to inspect or audit. A Cedar rule, however, is explicit, inspectable, and easy to alter.

Formalizing Intent into Policy-as-Code

Finally, we can source policy content from our agent context directly. We can take standard, plain-text security guidelines such as “No Dangerous Commands” meaning no rm -rf or sudo and formalize them into a Cedar policy. The resulting policy explicitly forbids the agent from performing a shell execution action if the context parameters match those dangerous commands.

Now that we have our formalized policies, the next technical hurdle is setting them up as policy decision points and attaching them to the agents running on a developer’s machine. Let’s build up a hook-based harness.

Hook-based Harness Architecture

We use local Hook Adapters for Claude Code, Cursor, GitHub Copilot CLI, and Gemini CLI to intercept events over stdio. These adapters normalize the trajectory events and send them to a local Harness Service.

Before an event is serialized, the Harness Service passes the event through a Guardrails Layer to compute attributes using yara signatures, policy models, and information flow control models. Finally, the Cedar Policy Engine takes those context values and authorizes or blocks the event, while updating entity and trajectory stores for stateful bookkeeping.

Agentic Cedar Policy Generation

Writing these granular Cedar policies manually can be tedious. But thanks to its formal properties, we can generate them with models and then verify and analyze them with built-in language tools. A policy agent outside the system helps us author and validate the policy features and context available over an MCP server.

Destructive Commands in Claude Code

We can also block destructive actions. Here we have a policy looking for SQL commands, designed to forbid the agent from performing a SQL delete statement without a WHERE clause.

When Claude attempts an irreversible command like this, our hook catches it before any damage is done and returns that context back to steer the agent or terminate the loop.

Information Flow Control in Gemini CLI

Here is how we prevent an agent from leaking your data. In this Gemini session, we have a policy that blocks network commands on highly confidential trajectories.

If an agent reads highly confidential data, it is blocked from executing a WebFetch, ensuring sensitive data cannot be sent to public sinks.

Lethal Trifecta in Cursor

Finally, in this last demo for Cursor, we can demonstrate blocking the lethal trifecta. Say we download a skill from a public marketplace to generate code metrics. It analyzes our code and attempts to run a metrics script.

Unbeknownst to us, it collects environment variables and sends them over an HTTP request. Because the trajectory is marked with a Confidential label and an exfiltration taint populated by the policy model, the shell command is strictly forbidden.

What’s Next

As these systems become more capable and autonomous, oversight and control become more complex. Here is where the architecture is heading:

Deterministic Policy Engines: The era of relying purely on the inherent alignment of LLMs or vague system prompts is ending. We must establish a robust security boundary by externalizing context to a deterministic policy engine outside the model, ensuring attackers cannot simply bypass softer safeguards.

The Goldilocks Policy Zone: Defining policies so an agent is sufficiently constrained yet remains functional is hard. We don’t want overly restrictive policies that cripple the agent, nor do we want to rely only on brittle pattern matching that invites policy hacking.

Policy Generation Scalability: In environments where new tools and skills are deployed to agents daily, manual policy authoring is unsustainable. We are building agent-assisted policy generation to author and validate policy context on the fly.

Multi-Turn, Stateful Policies: While authorization languages like Cedar are inherently stateless, our architecture uses an Entity and Trajectory Store to accumulate state and expose it as dynamic attributes. We’re also working with other logic systems like Linear Temporal Logic, to track stateful predicates and catch multi-hop workflow hijackings across entire agentic trajectories.

Agent security is a systems engineering challenge, not merely a model alignment problem. Prompting does not constitute a valid security boundary because models cannot perfectly follow instructions or reliably distinguish between system prompts and user data. While existing permission systems induce consent fatigue and sandbox systems can be overly restrictive or lack trajectory context, they still serve as valuable defense-in-depth measures. We can complement these with hard boundaries by formalizing security intent into policy-as-code for deterministic monitoring, alongside aggregating signals from softer, model-based guardrails.

We have to secure these systems one token at a time, one action at a time, and one trajectory at a time!

Excellent article, and a great tool to translate governance into engineering constraints. This will be essential for deploying agents in regulated industries, where errors can result in substantial financial and reputational losses.

Amazing talk on securing coding agents. These tools absolutely need hard boundaries, and sandboxing isn't enough because coding agents need to access sensitive code and external websites and repos in order to be effective. Finally, this gives real, provable controls that are far more than a system prompt politely asking the coding agent to behave.